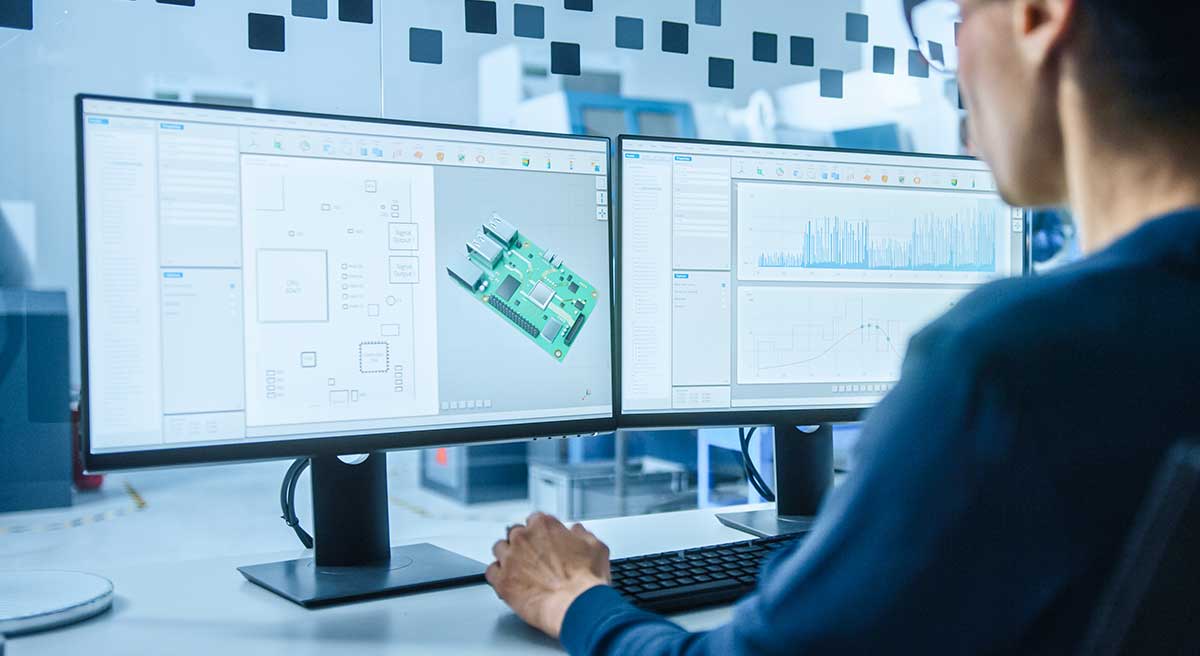

Rapid product development – the smart way.

Industrial innovation through purpose-built smart products and actionable data analytics.

Scalable Smart Solutions

Build a subscription-based smart product your customers can't live without

Valuable Data Insights

Gather and visualize data insights to help optimize your business or add value for your customers

Engineering Expertise

We can serve as your in-house engineering department or as an extension of your existing team

We specialize in revenue-boosting, efficiency-producing smart products

2023 IoT Evolution Business Impact Award